Accurate log analytics – for the greater good of devs

In today's digital world, businesses generate enormous amounts of machine data in the form of logs and events from every part of the technology stack. This data can provide crucial analytics and insights into applications, infrastructure, and business performance. Businesses need better log analytics to make sense of it, enabling faster troubleshooting, proactive monitoring, and insightful data analysis and reporting. According to a MarketsandMarkets research study, the log management market is expected to grow from USD 2.3 billion in 2021 to USD 4.1 billion by 2026, a Compound Annual Growth Rate (CAGR) of 11.9%. In this blog, I will explain why accurate and intelligent log analytics is essential, the challenges of managing vast amounts of log data, and best practices for getting accurate log analytics.

Why is better log analytics needed?

Log analytics aims to ensure that the log data an organization collects is actually useful. It offers multiple benefits that help organizations maximize application performance and strengthen cybersecurity. However, these advantages materialize only if log analytics is performed effectively and efficiently.

- Determine root cause faster on high-cardinality logs: High-cardinality logs are logs containing many unique values for a given field or attribute. For example, logs that track user activity on a website have high cardinality for the user ID field. Logs from applications, infrastructure, and networking also often contain high-cardinality data such as unique IP addresses, session IDs, and instance IDs. Such data is challenging to store, convert, and analyze as metrics (every unique value would become a separate time series), and traditional analysis methods may not provide enough insight into it. Accurate log analytics tools, on the other hand, can handle the complex and diverse data structures found in high-cardinality logs. By centrally collecting the data, sorting through it, and providing tools to make sense of it, they help identify patterns, anomalies, and other vital information that might otherwise go unnoticed. Accurate log analytics also improves operational efficiency by helping identify issues and errors in real time, so teams can respond quickly and keep problems from escalating into more significant ones.

- Gather business insights from log data: Log analytics breaks down data silos and reduces the need for multiple tools. The same log data can also serve business operations teams, for example by enabling real-time monitoring of business operations: identifying patterns and tracking KPIs to better understand business performance. With better visibility into operations, organizations can make more informed decisions that drive growth and profitability. By analyzing customer activity logs, organizations can also gain valuable insights into customer behavior and preferences. This helps them tailor products and services to customer needs, increasing customer satisfaction and loyalty.

- Proactive monitoring: Proactive monitoring is one of the critical benefits of log analytics. With better log analytics, IT teams can continuously monitor application performance, system behavior, and any unusual activity across the entire application stack. This makes it possible to resolve issues before they affect performance. By following best practices for log analytics, organizations can improve operational efficiency, reduce downtime, and enhance the overall user experience. Log analytics tools can be configured to send real-time alerts when the log data crosses pre-defined thresholds. They can also identify correlations between different events or data points, pinpointing the root cause of issues. By consolidating log data from different systems and applications into a single location, log analytics tools make it easier for IT teams to monitor and troubleshoot issues across the entire application stack.

- Troubleshooting: Troubleshooting is a systematic method of problem-solving, used first to detect and then to rectify issues across systems. A sound log analytics system unifies, aggregates, structures, and analyzes log data, which enables advanced troubleshooting. It gives you a baseline of all your log data as it is received, so you can gain insight before setting up a single query. Event logs record what happened, with relevant details such as timestamps and error messages, which lets you rule out other possible causes by a process of elimination. With this level of insight, you can trace issues to their root cause while seeing how components interact, which helps identify correlations. You can also view the events that occurred just before or after a critical event and pinpoint the problem more effectively.

- Security/Compliance: The importance of log analysis in addressing security and compliance concerns cannot be overstated. One of the primary reasons organizations should care about log analysis is forensics: understanding and responding to security incidents such as data breaches. In the event of suspicious activity or a security incident, logs provide valuable information that helps organizations understand what happened, determine the extent of a breach, and take steps to prevent similar incidents. This information can also be used to support legal and regulatory compliance requirements. For example, in 2019, Capital One suffered a massive data breach that affected over 100 million customers in the United States and Canada. The breach was caused by a misconfigured web application firewall in Capital One's cloud infrastructure, which allowed an attacker to access customer data stored in Amazon Web Services (AWS) S3 buckets. By analyzing log data from various sources, such as servers, applications, and network devices, the organization could identify the indicators of compromise and determine the extent of the breach.
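To make the high-cardinality point concrete, here is a minimal Python sketch that counts distinct values per field across structured log records and flags fields whose distinct-to-total ratio is high. The helper names (`field_cardinality`, `high_cardinality_fields`), the sample records, and the 0.7 ratio are illustrative assumptions, not part of any particular tool.

```python
from collections import defaultdict


def field_cardinality(records):
    """Count distinct values per field across structured log records (dicts)."""
    seen = defaultdict(set)
    for record in records:
        for field, value in record.items():
            seen[field].add(value)
    return {field: len(values) for field, values in seen.items()}


def high_cardinality_fields(records, ratio=0.7):
    """Return fields whose distinct-value count is at least `ratio` of the record count.

    The 0.7 default is an arbitrary illustrative threshold.
    """
    counts = field_cardinality(records)
    total = len(records)
    return sorted(field for field, n in counts.items() if n / total >= ratio)


# Hypothetical sample records.
logs = [
    {"level": "ERROR", "user_id": "u-1001", "client_ip": "10.0.0.5", "service": "checkout"},
    {"level": "INFO", "user_id": "u-1002", "client_ip": "10.0.0.9", "service": "checkout"},
    {"level": "ERROR", "user_id": "u-1003", "client_ip": "10.0.0.7", "service": "checkout"},
    {"level": "INFO", "user_id": "u-1001", "client_ip": "10.0.0.5", "service": "search"},
]

print(field_cardinality(logs))        # {'level': 2, 'user_id': 3, 'client_ip': 3, 'service': 2}
print(high_cardinality_fields(logs))  # ['client_ip', 'user_id']
```

Fields such as `user_id` and `client_ip` are the ones that would explode into many separate series if turned into metric labels. Keeping them as searchable log fields preserves the detail needed for root-cause analysis.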
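The proactive-monitoring point mentions alerts based on pre-defined thresholds. A minimal sketch of one such rule is shown below: fire when more than a set number of ERROR events arrive within a sliding time window. The class name `ErrorRateAlert` and the chosen threshold and window are hypothetical. The sketch also assumes events arrive in timestamp order.

```python
from collections import deque
from datetime import datetime, timedelta


class ErrorRateAlert:
    """Fire when more than `threshold` ERROR events fall within `window`."""

    def __init__(self, threshold, window):
        self.threshold = threshold
        self.window = window
        self.errors = deque()  # timestamps of recent ERROR events, oldest first

    def observe(self, timestamp, level):
        """Feed one log event (in time order); return True if the alert condition is met."""
        if level == "ERROR":
            self.errors.append(timestamp)
        # Drop errors that have slid out of the window.
        while self.errors and timestamp - self.errors[0] > self.window:
            self.errors.popleft()
        return len(self.errors) > self.threshold


alert = ErrorRateAlert(threshold=2, window=timedelta(minutes=1))
start = datetime(2023, 5, 1, 12, 0, 0)
# (seconds after start, level) pairs for a hypothetical event stream.
events = [(0, "ERROR"), (10, "INFO"), (20, "ERROR"), (30, "ERROR"), (120, "ERROR")]
fired = [alert.observe(start + timedelta(seconds=s), level) for s, level in events]
print(fired)  # [False, False, False, True, False]
```

The third error within one minute trips the alert. By the time the error at 120 seconds arrives, the earlier ones have aged out of the window, so the alert clears. Real platforms express the same idea declaratively, but the windowing logic is the core of it.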
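The troubleshooting point describes viewing the events just before and after a critical event. The sketch below pulls that surrounding context for every matching line, similar in spirit to `grep -B`/`-A`. The function name `context_around` and the sample log lines are assumptions made for illustration.

```python
def context_around(lines, predicate, before=2, after=2):
    """Return (index, surrounding_lines) for every line that matches `predicate`."""
    hits = []
    for i, line in enumerate(lines):
        if predicate(line):
            lo = max(0, i - before)
            hi = min(len(lines), i + after + 1)
            hits.append((i, lines[lo:hi]))
    return hits


# Hypothetical application log.
log_lines = [
    "12:00:01 INFO  api  request started id=42",
    "12:00:02 INFO  db   query users id=42",
    "12:00:03 WARN  db   slow query 2300ms id=42",
    "12:00:04 ERROR api  timeout id=42",
    "12:00:05 INFO  api  retry scheduled id=42",
    "12:00:06 INFO  api  request started id=43",
]

for index, window in context_around(log_lines, lambda line: " ERROR " in line):
    print(f"match at line {index}:")
    for line in window:
        print("   ", line)
```

Here the slow database query logged one second earlier shows up right next to the API timeout, which is exactly the kind of correlation that is easy to miss when looking at the error line alone.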

Challenges of managing vast amounts of log data

Businesses face two main challenges when managing vast amounts of log data. The first is the environments themselves, because modern business environments are increasingly distributed. The second is data size: it feels like finding a needle in a haystack while also making sure you have enough relevant information. Both can be daunting, so let us break them down further.

- Volume: To meet security standards, a company must thoroughly review all its logs using a log analyzer. However, this is difficult with large log volumes, as it can strain IT resources, and traditional log monitoring tools in particular may not be efficient enough.

- Variety in formats: Logs come in different formats depending on their source, which makes log analytics challenging. Most log monitoring solutions expect a standard format, but not all logs conform to it, so some log data may be lost or go unaccounted for during analysis. As a result, more time and effort are required to extract and interpret vital information correctly.

- Accessibility: IT teams must ensure that logs are captured, categorized, and automatically discovered in a log management platform so they are easy to access. To facilitate access, it is essential to categorize logs, timestamp them properly, and index them. A query-based search then lets users search and filter stored logs from a centralized location. With these prerequisites in place, users can readily locate and access the logs they need.

- Speed: Speed is another hiccup in traditional log management of massive datasets. With a large volume of information collected from log servers for analysis and storage, and the frequent need to parse it manually and accurately, effective log management takes considerable time. Keeping the process fast while also presenting the information in a well-organized manner is difficult to achieve.

- Parsing accuracy: The next challenge is parsing each log's information correctly. Accuracy is crucial when analyzing a system event log, and each line must be parsed correctly. While various tools can help with this task, it often requires manual administration. Additionally, if important information is missing, it must be traced and investigated manually to understand why it was not included in the logs. These difficulties make parsing a significant challenge for IT administrators, who must define specific rules and criteria before the parsing process begins.

- Automation: While much of the log collection process can be automated, human intervention is essential to provide context and analysis, achieve comprehensive monitoring, and establish automated remediation. Expert input and AIOps capabilities are needed so the system can learn and improve over time, avoid false alerts, and increase accuracy. It may seem ironic, but timely human intervention is required to automate log management successfully.
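The format-variety and parsing-accuracy challenges can be illustrated with a small normalizer. It applies an explicit rule per source format, maps each line onto one common schema, and keeps lines that no rule matches instead of silently dropping them, so they can be investigated. This is a minimal sketch: the two regular expressions (one loosely modeled on the common web-server access-log layout, one on a generic application log), the JSON field names, and the helper names are all hypothetical. Real sources will need their own rules.

```python
import json
import re

# Hypothetical parsing rules, one per source format.
ACCESS_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<ts>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3})'
)
APP_RE = re.compile(r"(?P<ts>\S+ \S+) (?P<level>[A-Z]+) \[(?P<service>[\w-]+)\] (?P<msg>.*)")


def parse_line(line):
    """Map one raw line onto a common schema, or return None if no rule matches."""
    line = line.strip()
    if line.startswith("{"):
        try:
            data = json.loads(line)
        except json.JSONDecodeError:
            return None
        return {"source": "json", "ts": data.get("time"),
                "level": str(data.get("level", "INFO")).upper(), "message": data.get("msg", "")}
    m = ACCESS_RE.match(line)
    if m:
        status = int(m["status"])
        return {"source": "access", "ts": m["ts"],
                "level": "ERROR" if status >= 500 else "INFO",
                "message": f'{m["method"]} {m["path"]} {status}'}
    m = APP_RE.match(line)
    if m:
        return {"source": "app", "ts": m["ts"], "level": m["level"],
                "message": f'[{m["service"]}] {m["msg"]}'}
    return None


def normalize(lines):
    """Split raw lines into normalized records and lines that need a new rule."""
    parsed, unparsed = [], []
    for line in lines:
        record = parse_line(line)
        if record is None:
            unparsed.append(line)
        else:
            parsed.append(record)
    return parsed, unparsed


raw = [
    '10.0.0.5 - - [01/May/2023:12:00:04 +0000] "GET /checkout HTTP/1.1" 502 512',
    "2023-05-01 12:00:04 ERROR [payment-svc] card gateway timeout",
    '{"time": "2023-05-01T12:00:05Z", "level": "warn", "msg": "retrying payment"}',
    "kernel: something unexpected",
]
parsed, unparsed = normalize(raw)
for record in parsed:
    print(record)
print("needs a rule:", unparsed)
```

Returning the unmatched lines, rather than discarding them, addresses the "missing information" problem described above: each unparsed line tells you which rule still has to be written.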
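The accessibility point notes that logs must be indexed before a query-based search can work. The toy in-memory inverted index below maps each token to the lines that contain it and answers AND queries by intersecting those sets. It only illustrates the idea. Production log platforms use far more elaborate indexing, and the class name `LogIndex` and the tokenization rule are assumptions.

```python
import re
from collections import defaultdict


class LogIndex:
    """Toy inverted index: lowercase token -> set of line numbers."""

    def __init__(self):
        self.lines = []
        self.postings = defaultdict(set)

    def add(self, line):
        line_no = len(self.lines)
        self.lines.append(line)
        # Tokens are runs of word characters, dots, and hyphens (an arbitrary choice).
        for token in re.findall(r"[\w.-]+", line.lower()):
            self.postings[token].add(line_no)

    def search(self, *terms):
        """Return the lines that contain every term (case-insensitive AND query)."""
        sets = [self.postings.get(term.lower(), set()) for term in terms]
        if not sets:
            return []
        hits = set.intersection(*sets)
        return [self.lines[i] for i in sorted(hits)]


idx = LogIndex()
for line in [
    "2023-05-01T12:00:03Z WARN db slow query",
    "2023-05-01T12:00:04Z ERROR api timeout",
    "2023-05-01T12:00:09Z ERROR db connection refused",
]:
    idx.add(line)

print(idx.search("ERROR", "db"))  # ['2023-05-01T12:00:09Z ERROR db connection refused']
```

The query touches only the posting sets for its terms rather than scanning every stored line, which is why indexing matters so much once log volume grows.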

Best practices for accurate log analytics

Here are some practices that help improve log analytics:

- Use the same resource group for all the workspaces in the environment: To simplify workspace management, use the same resource group for all workspaces. This allows for easy identification of the purpose of each workspace and simplifies the deletion of unused workspaces.

- Keep a short data retention period: To save costs and facilitate data querying, keep a short data retention period in the workspace. Storage providers generally charge based on the amount of storage used, so deleting unnecessary data is cheaper than keeping it.

-

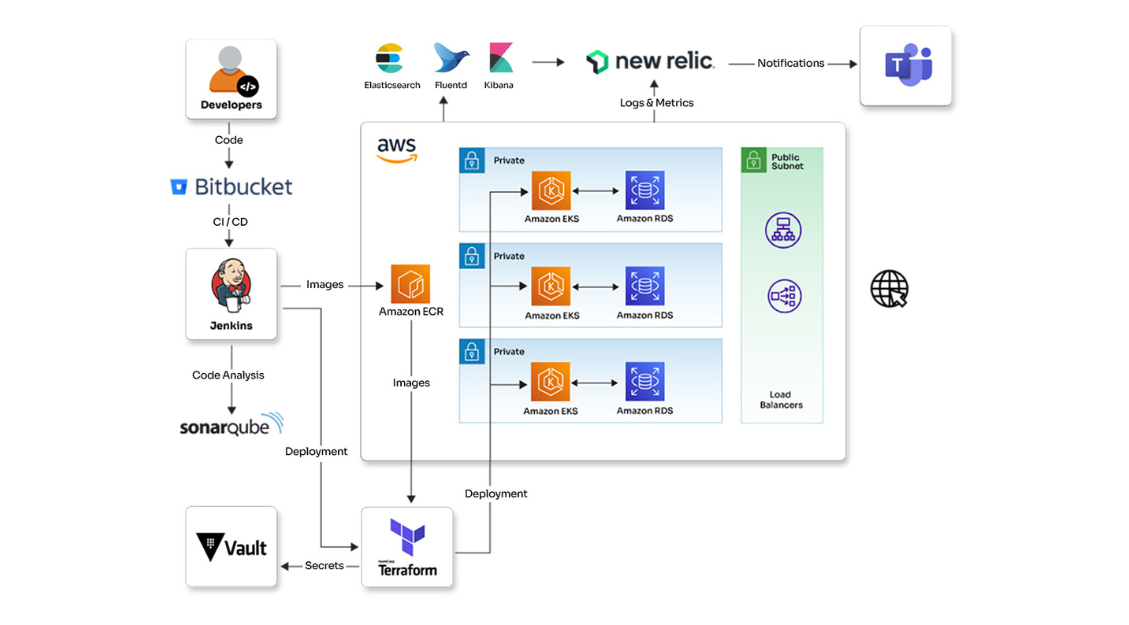

Centralized logging and log aggregation: To efficiently manage and analyze log data, it's crucial to centralize all log data into one shared pool separate from the production environment. This facilitates organized management and enables cross-analysis and identification of correlations between various data sources. By centralizing log data, organizations can mitigate the risk of log data loss in an auto-scaling environment while improving the quality of the information derived.

- Use logging proactively: Logs should not be reserved for troubleshooting; they should be analyzed proactively to identify and mitigate errors before users notice them. Even when things are going well, analyzing logs informs future product development and surfaces opportunities to improve system performance. Many teams refer to logs only when there is an issue, but this approach misses the chance to identify and prevent errors early.

- Keep log messages descriptive: Descriptive log messages make debugging easier. Without helpful descriptions of what happened, where, when, and who or what was affected, debugging can be daunting. Descriptive, comprehensive logs make it easy to pinpoint issues and resolve them quickly.

- Use unique identifiers: Unique identifiers are another excellent aid to painless debugging, support, and analytics. They let you track particular user sessions and pinpoint actions taken by individual users. The resulting breakdown of a user's activity makes it easier to trace an entire transaction and, for customer support, to understand the cause of the user's issue.

- Implement end-to-end logging: End-to-end logging is crucial for gaining a comprehensive view of application performance and improving user experience. It involves monitoring and logging all relevant metrics and events from system components, application layers, and end-user clients. End-to-end logging shows how problems develop and spread across the system, so you can react in time to mitigate risks. It also shows system performance from the end user's point of view, taking into account factors such as page load times and network latency.

- Avoid logging sensitive or non-essential data: To avoid higher costs and a longer time to insight, log only essential diagnostic information and leave out non-essential data. Logging sensitive information such as PII or proprietary data can also lead to data privacy and security violations. Logging unique identifiers instead of personal information still lets you correlate events by session or user.

Logging for success

In conclusion, better log analytics is crucial for optimizing application security monitoring and ROI over time. The question is no longer whether to log, but how and what to log. By following logging best practices, you can make the data produced by your devices and network support better decision-making for business purposes.

Opcito prioritizes strict log management practices, ensuring logs are precise, concise, up to date, and readily available to anyone on the team. Our engineers are nurtured in a culture of following logging best practices. These practices have helped us troubleshoot problems, enhance security, save time, effort, and costs, and gather better insights for decision-making. I hope this blog provides valuable insights to help you get started on your logging journey.