The word "cloud" has ruled the technology world for more than a decade now, yet when I talk to clients from different industry segments, I still see people who want to work on-prem alongside their cloud environments. To be honest, I don’t blame them. Most of the time, the reason is an existing heavy investment in on-prem infrastructure. Even at Opcito, we have been using this combination to develop state-of-the-art applications for our clientele. But this mixed approach results in complex environments that are becoming difficult to manage. On top of this, when it comes to the cloud, we are spoilt for choice: AWS, Azure, IBM, Alibaba, Google, and the list goes on. Speaking of Google, the 2019 edition of Google Cloud Next concluded last month, and one particular release was the talk of the town: Anthos.

Anthos is basically a service for hybrid cloud and workload management that runs on the Google Kubernetes Engine (GKE). Apart from the Google Cloud Platform (GCP), you will be able to manage workloads running on third-party clouds like AWS and Azure. This simply means you can now use the cloud you like for your application deployment and management needs. Your admins and developers don’t need to learn the APIs and environment-specific functionality that come with every new cloud, only Google’s. Building on the Cloud Services Platform that was announced last year, Google is making Anthos’ hybrid cloud functionality generally available on GCP with GKE and in data centers with GKE On-Prem. Anthos can also work with third-party cloud service providers, including Google’s biggest competitors, AWS and Azure. Sounds interesting, yeah? But is that all? Well, certainly not! Google has also evolved Anthos to modernize legacy applications by containerizing them, and it has enhanced the overall security of on-premise as well as cloud infrastructure. In short, Anthos is built to manage hybrid clouds.

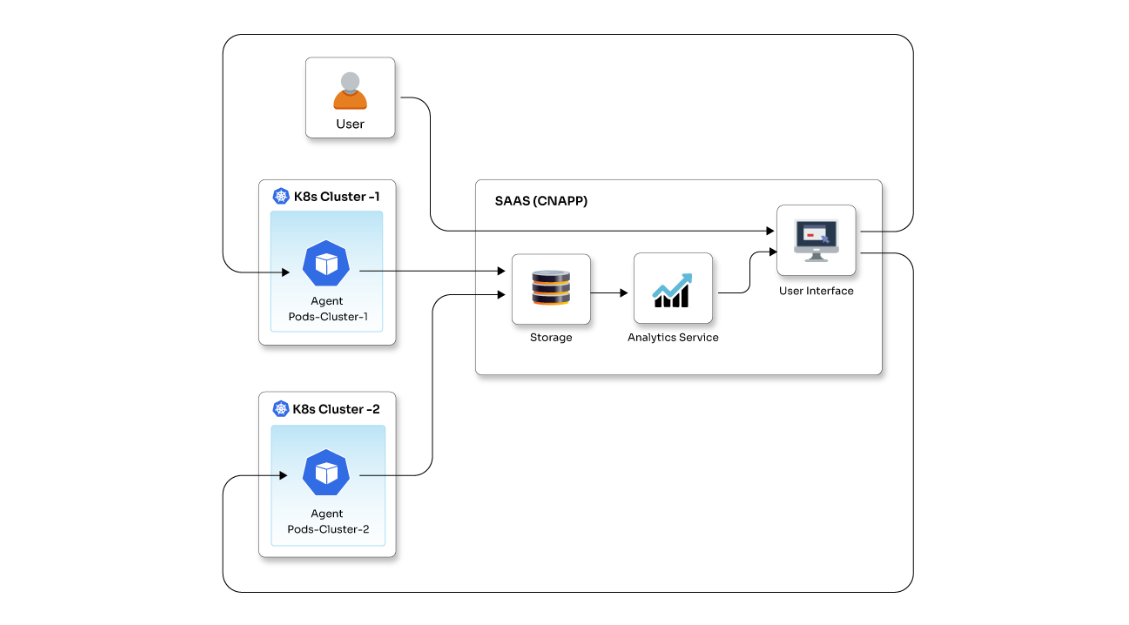

Let’s look at the building blocks that make Anthos an exciting prospect for developers and administrators:

Google Kubernetes Engine

Google Kubernetes Engine is the heart of Anthos. GKE efficiently takes care of most of the critical and time-consuming activities, such as managing clusters and their dependent applications, monitoring applications and repairing most failures, and moving applications between on-prem and cloud. GKE lets you control access with your Google account and reserve an IP address range for your cluster so that it can coexist with private network IPs over Google Cloud VPN. You can specify the CPU and memory your workloads need, and GKE can scale the deployment up or down depending on resource usage such as memory. Stackdriver Logging and Stackdriver Monitoring help you gain actionable insights into how your applications behave. GKE supports the Docker container format, which builds on Linux namespaces, control groups, and union file systems. Google Site Reliability Engineers keep your clusters available. GKE runs on Container-Optimized OS, which is specially designed for running containers, and it has a built-in dashboard for managing resources.
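
To make the scaling idea a little more concrete, here is a minimal sketch of the replica calculation documented for the Kubernetes Horizontal Pod Autoscaler. I am assuming this is the mechanism behind the scaling described above; the function name and the sample numbers are my own, and the real autoscaler also applies a tolerance band and min/max replica limits that are left out here.

```python
import math


def desired_replicas(current_replicas: int, current_usage: int, target_usage: int) -> int:
    """Suggest a replica count using the documented HPA formula:

    desired = ceil(current_replicas * current_usage / target_usage)

    Usage values are per-pod averages in the same unit (for example MiB of memory).
    """
    if current_replicas < 1:
        raise ValueError("current_replicas must be at least 1")
    if target_usage <= 0:
        raise ValueError("target_usage must be positive")
    # Never suggest fewer than one replica in this simplified sketch.
    return max(1, math.ceil(current_replicas * current_usage / target_usage))


if __name__ == "__main__":
    # Hypothetical numbers: 4 pods averaging 900 MiB against a 600 MiB target.
    print(desired_replicas(4, 900, 600))
    # The same 4 pods averaging only 200 MiB.
    print(desired_replicas(4, 200, 600))
```

With these made-up inputs, the first call suggests scaling up to 6 replicas and the second suggests scaling down to 2.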

GKE On-Prem

GKE On-Prem is specifically designed and developed for on-premise deployments, and you can manage your on-premise clusters from the Cloud Console. It brings the benefits that Kubernetes provides in cloud environments into your own data center. Google takes care of the K8s version upgrades and security patches. It eliminates the need for VPNs when connecting on-prem clusters to GCP. Cloud Identity controls cluster access, whereas the GCP Console offers a dashboard that can be used to manage resources. Stackdriver Logging and Stackdriver Monitoring can be used to evaluate the cluster against various parameters.

It’s important to note that GKE On-Prem runs as a virtual appliance on top of VMware vSphere 6.5. Support for other hypervisors, such as Hyper-V and KVM, is a work in progress, and we might hear more on this soon.

Google Cloud Platform Marketplace

Kubernetes applications are ready-to-use containerized applications that you can deploy instead of building from scratch. Google has created a marketplace where you can get open-source applications as well as curated offerings from independent software vendors (ISVs). The products range from databases to lifecycle management tools, and they can run on Anthos, in the cloud, on-premise, and in other environments that involve Kubernetes clusters. Because the solutions are ready to use, users can invest more time in development rather than in setup. The marketplace also offers free versions of some of the applications. According to Google, these applications are vetted for security and come with maintenance and support services.

Anthos Config Management

If you are working with multiple Kubernetes deployments across environments, Anthos Config Management will be a key tool for you. With it, you can apply configuration to multiple clusters and maintain them at the same time, which enables rapid app development across hybrid container environments. A central Git repository holds the access and policy controls and ensures they are enforced consistently. That consistent environment also improves security for developers. Configuration is written in the standard Kubernetes formats, YAML and JSON. In Anthos Config Management, different quota levels can be allocated to staging and production resources, which simplifies the process of configuring policies for groups of clusters.
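
As a rough illustration of the "different quota levels for staging and production" idea, the sketch below generates one standard Kubernetes `ResourceQuota` manifest per namespace as JSON. The quota numbers, the file names, and the helper functions are all hypothetical, and the sketch says nothing about how Anthos Config Management expects the files to be laid out in the Git repository.

```python
import json

# Hypothetical quota levels; the numbers are placeholders, not recommendations.
QUOTAS = {
    "staging": {"requests.cpu": "4", "requests.memory": "8Gi"},
    "production": {"requests.cpu": "16", "requests.memory": "32Gi"},
}


def resource_quota(namespace: str, hard: dict) -> dict:
    """Build a Kubernetes ResourceQuota manifest for one namespace."""
    return {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": f"{namespace}-quota", "namespace": namespace},
        "spec": {"hard": dict(hard)},
    }


def render_all(quotas: dict) -> dict:
    """Return a mapping of (hypothetical) file name to JSON manifest text."""
    return {
        f"{namespace}-quota.json": json.dumps(
            resource_quota(namespace, hard), indent=2, sort_keys=True
        )
        for namespace, hard in quotas.items()
    }


if __name__ == "__main__":
    for filename, text in render_all(QUOTAS).items():
        print(f"# {filename}")
        print(text)
```

Keeping the quota levels in one table and generating the manifests from it means staging and production differ only in the numbers, which is the kind of consistency a central repository is meant to give you.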

GKE Hub

GKE Hub is the connectivity component of Anthos. With GKE Hub, you can connect GKE On-Prem clusters to the Google Cloud Services Platform through the GCP Console. Kubernetes cluster and workload data can then be viewed from your GKE dashboards. Moreover, GKE Hub provides insights from this data and helps you manage your clusters accordingly.

Istio

Istio is a service mesh that connects all the components, such as the database, GCP, and other third-party clouds. It can be set up along with a new cluster or added to an existing one. It is responsible for the management of microservices through load balancing, traffic management, monitoring, and service-to-service communication. It gives you visibility into service behavior, so you get a better idea of performance along with insights into the application. It empowers the user with capabilities like setting up circuit breakers, timeouts, retries, and traffic splits. It also comes with active and passive health checks and rapid failure recovery.
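
To show what timeouts, retries, and traffic splits look like in practice, here is a small sketch that builds an Istio `VirtualService` manifest as a Python dictionary. The field names follow the `networking.istio.io/v1alpha3` API; the service name `reviews`, the subsets `v1` and `v2`, and the helper function are hypothetical. The subsets would still need a matching `DestinationRule`, which is also where circuit breaking is configured, and that part is not shown.

```python
import json


def virtual_service(host: str, weights: dict, timeout: str = "5s",
                    attempts: int = 3, per_try_timeout: str = "2s") -> dict:
    """Build a VirtualService that splits traffic for `host` across subsets.

    `weights` maps a subset name to its percentage of traffic.
    """
    if sum(weights.values()) != 100:
        raise ValueError("route weights must add up to 100")
    routes = [
        {"destination": {"host": host, "subset": subset}, "weight": weight}
        for subset, weight in weights.items()
    ]
    return {
        "apiVersion": "networking.istio.io/v1alpha3",
        "kind": "VirtualService",
        "metadata": {"name": f"{host}-split"},
        "spec": {
            "hosts": [host],
            "http": [{
                "route": routes,
                # Overall deadline for the request, retries included.
                "timeout": timeout,
                # Retry failed calls, each with its own shorter deadline.
                "retries": {"attempts": attempts, "perTryTimeout": per_try_timeout},
            }],
        },
    }


if __name__ == "__main__":
    # Hypothetical canary rollout: 90% of traffic to v1, 10% to v2.
    print(json.dumps(virtual_service("reviews", {"v1": 90, "v2": 10}), indent=2))
```

The printed JSON could be applied to a cluster that has Istio installed; shifting more traffic to `v2` is then just a matter of changing the two weights.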

Google has an exceptional market presence and supports a broad spectrum of hardware and software platforms and applications through collaborations with some of the leaders in this space, such as Cisco, Dell, HP, VMware, MongoDB, Elastic, and GitLab, among others. With Anthos, Google is planning to take on its competitors, Microsoft and Amazon: once you move your data from whatever platform you are using at present to Google’s platform, you can use all of its features.

The only things I am a little skeptical about are the pricing model and the expertise required to manage the Google environments. Firstly, the model is not “pay-as-you-go”, which I generally prefer. Google will charge USD 10,000 a month for a block of 100 vCPUs. If your requirement exceeds 100 vCPUs, you will have to add another block of 100 vCPUs and pay an additional USD 10,000, and so on. The good thing is that the pricing remains the same irrespective of where your workloads are running, be it GCP or the likes of AWS or Azure.
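
The block pricing is easy to misjudge, so here is a quick back-of-the-envelope sketch of the arithmetic. It uses only the figures quoted above (USD 10,000 per block of 100 vCPUs); the function names are mine, and it ignores any commitment terms or discounts that may apply.

```python
BLOCK_SIZE_VCPUS = 100     # vCPUs covered by one block, as quoted above
BLOCK_PRICE_USD = 10_000   # price of one block, as quoted above


def anthos_blocks(vcpus: int) -> int:
    """Number of 100-vCPU blocks needed; partial blocks are rounded up."""
    if vcpus < 0:
        raise ValueError("vcpus must not be negative")
    # Integer ceiling division avoids floating-point rounding.
    return (vcpus + BLOCK_SIZE_VCPUS - 1) // BLOCK_SIZE_VCPUS


def anthos_cost_usd(vcpus: int) -> int:
    """Cost for the given number of vCPUs under block pricing."""
    return anthos_blocks(vcpus) * BLOCK_PRICE_USD


if __name__ == "__main__":
    for vcpus in (100, 101, 250):
        print(vcpus, "vCPUs ->", anthos_blocks(vcpus), "block(s), USD", anthos_cost_usd(vcpus))
```

Note how 101 vCPUs costs the same as 200: going even one vCPU over a block boundary doubles the bill, which is exactly why I prefer pay-as-you-go.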

Coming to the expertise part, you will have to make your workforce well-versed in Kubernetes, GCP environments, and other Google applications to make the most of Anthos. Clearly, Google is creating a sustainable market for itself with Anthos, but if it saves you from hybrid environment management headaches and the late-night shifts needed to maintain your on-prem setup, I would suggest you go for it.